Mario games since the N64 have often used advanced lighting effects, and sometimes incorporate them into level design gimmicks. I can see Nintendo using some RT for a level in the next Mario game.

Bite my shiny metal cockpit!


Soundwave said:

I do wonder if, with a future version of DLSS5 (I really wish they had given this a unique name), you'll be able to feed it data on what you want the final result to look like to iron out that problem. Like if the algorithm could understand that you want Donkey Kong to look like this (which is art from your own artists), particularly with the fur, then maybe it can understand and zero in on doing that up from a model that actually has a rendering cost of this:

|

Even this DK render is well below the Switch 2 capabilities. It's just a case of the team actually building an asset from the ground up for Switch 2 and utilising the right tech/rendering techniques. If Nintendo is not implementing DLSS2 at the moment to free up resources, I don't think there's much use in speculating their use of DLSS5 lol

Otter said:

Even this DK render is well below the Switch 2 capabilities. It's just a case of the team actually building an asset from the ground up for Switch 2 and utilising the right tech/rendering techniques. If Nintendo is not implementing DLSS2 at the moment to free up resources, I don't think there's much use in speculating their use of DLSS5 lol |

DK Bananza was a Switch 1 game that was moved to Switch 2 (probably late into dev) so that explains a lot on that.

Nintendo even showed the Switch 1 version here:

I think they will definitely use DLSS5/6/7 whatever by the time Switch 3 is out, people doubted the Switch 2 would use DLSS too in the early days and how'd that go.

The fact that you can just offload most of the lighting compute to Tensor cores/NPU cores alone will be a huge benefit to a Switch 3. No point in bothering with path tracing/ray tracing really if you can just have that done on Tensor cores and not bog down the GPU with it. The lighting side of it will likely get better with future iterations too, this is just the first attempt. Right now it looks like the DLSS5 algo is trained to basically add perfect Hollywood style lighting to each scene (so a key light, fill light, back light, and I even notice an eye light too) and for some people that's jarring. They can likely adjust this for future revisions, though I think some people do like this look even as is.

How they feel about actual generative AI over art assets themselves ... that could be a different story. I think they'll be initially cautious about using that but eventually probably will cave on that too. It really depends on what the broader industry does. They have a few years now to just kinda sit back and see how this whole thing shakes out. For Switch 3 you're probably talking about a chip decision that's still 3-4 years out from today (2030); by then Nvidia will be well past the 50 or even 60 series and you'll probably have DLSS7 or something that is a lot better than DLSS5.

Cerebralbore101 said:

So after looking into it, so much of this stuff isn't needed for Nintendo 1st party games. Cartoon looking graphics don't need ray tracing at all. I could see benefits from neural textures, but it's one thing to tell an AI model to make a photorealistic stone texture on the fly, and another to ask it to texture anything organic and complex. Yeah, take 1000 pics of a glass or stone or wood object to train AI to make a texture for a material in game. That's a good use of AI. Same goes for saving RAM space. Or figuring out how to light a jewel in real time. But having it do what it does to RE Requiem or Hogwarts characters is horrible. And for Nintendo, their asset library is massive already. Why use AI to make a hyper realistic brick texture when some artist did it well enough for a cartoon artstyle in 2016 and you still have that asset? |

"Need" is a really odd word to use for game development.

Technically video games aren't a "need" at all. They're luxury goods. Developers don't "need" to improve visuals or performance at all. Games could have probably stayed at the 7th Generation level in terms of genre diversity and gameplay mechanisms.

Having said that, ray tracing definitely can benefit "cartoony" games. Many CGI animations look beautiful precisely because they use ray tracing, as an example. Accelerating it with an algorithm (that's all "AI", in this context, is) isn't that big of a deal really.

My point though is that DLSS 5 is not the full extent of neural rendering. Neural rendering is much more than that, and Nintendo is almost certainly going to use it in its development. DLSS 5 might arguably "draw over" assets, but Nvidia's (and AMD's, UE5's, Intel's, etc.) suites of features don't all do that. Some help accelerate rendering so that more high-quality assets can run on hardware that couldn't otherwise handle them.

If one's opposition to "AI" is the quality of the output, then that is almost certainly going to improve over time, and probably will be in a good place by SW3's release a decade-ish out from now.

Soundwave said:

It's not rendering anything, it's just an AI algorithm trained to be able to write over things like faces, lighting, materials, etc. and to quickly be able to alter the 2D image and "overhaul" the final output image you see on the screen. It's probably more accurate to call it "image manipulation done at extreme speed" (30 fps+). |

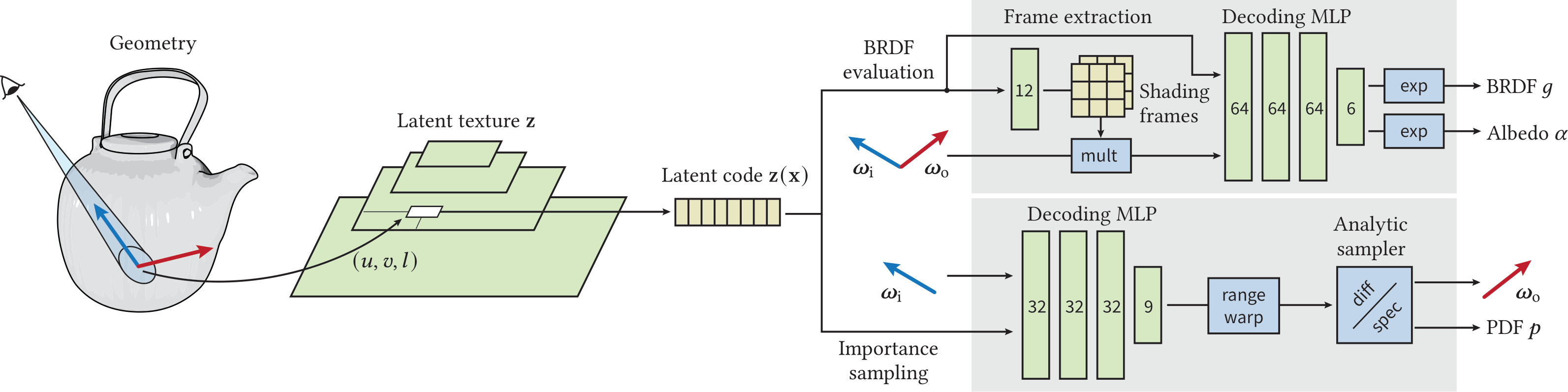

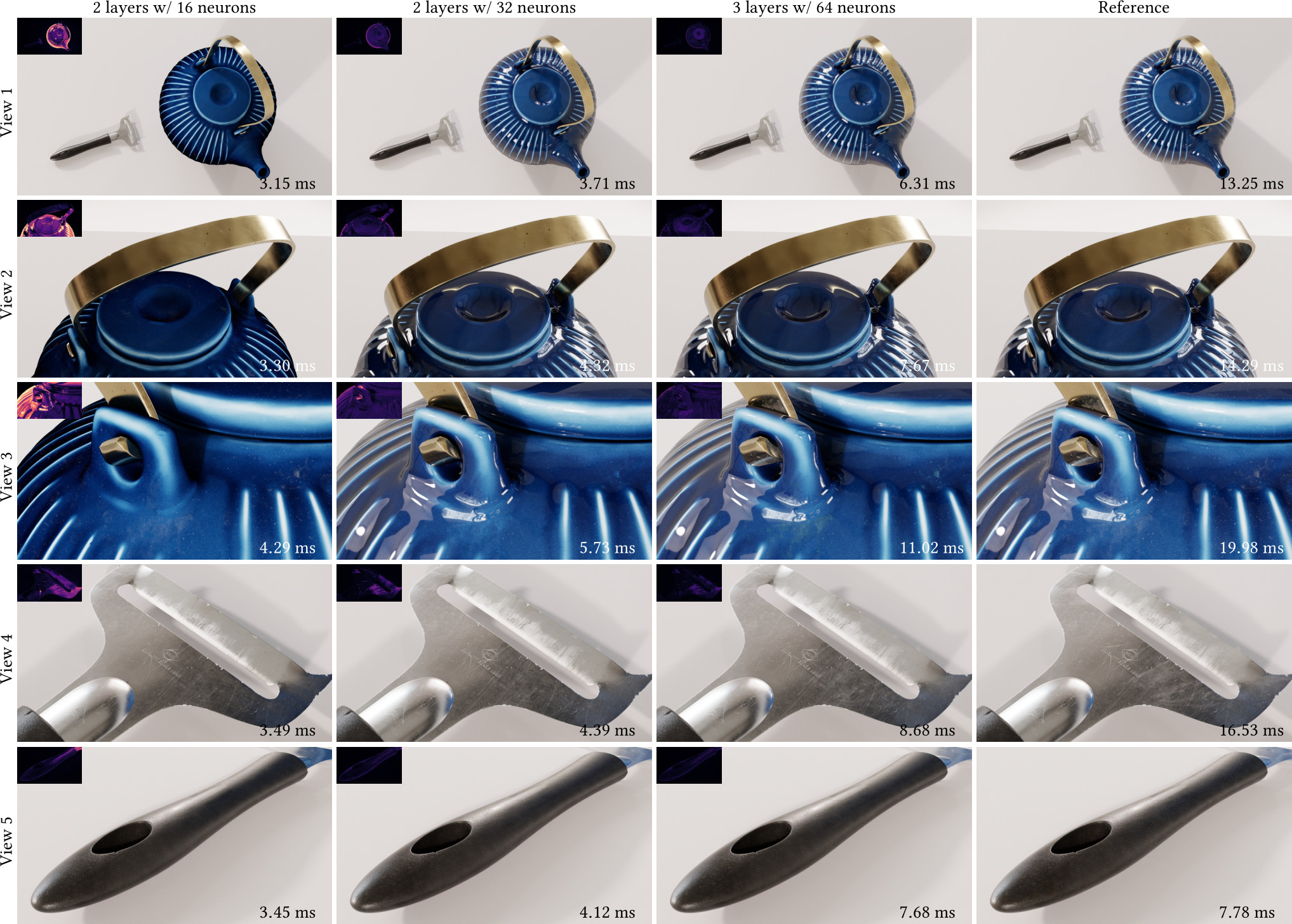

DLSS 5 is indeed a post-process effect, but all of these other features are directly part of the render pipeline and aren't post-process effects. In the case of neural materials, as an example, small MLPs tweak the material properties of specific materials to maintain a high quality without performance penalties. This happens far before the image is finally processed and output to the screen.

https://research.nvidia.com/labs/rtr/neural_appearance_models/

|

Our appearance model utilizes learned hierarchical textures that are interpreted using neural decoders, which produce reflectance values and importance-sampled directions. To best utilize the modeling capacity of the decoders, we equip the decoders with two graphics priors. The first prior—transformation of directions into learned shading frames—facilitates accurate reconstruction of mesoscale effects. The second prior—a microfacet sampling distribution—allows the neural decoder to perform importance sampling efficiently. The resulting appearance model supports anisotropic sampling and level-of-detail rendering, and allows baking deeply layered material graphs into a compact unified neural representation. |

The input is the actual sampled mesh and shading data, and the output is a set of material properties.
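To make that concrete, here's a rough toy sketch of the "small MLP as material decoder" idea. It is not Nvidia's implementation: the function names, layer sizes and the 8-value latent are all made up for illustration, the weights are random rather than trained, and it skips the learned shading frames and importance sampling the paper describes. It only shows the data flow: a per-texel latent plus incoming/outgoing directions go in, an RGB reflectance value comes out, all before any final image exists.

```python
import math
import random


def dense(weights, bias, x):
    """One fully connected layer: weights is a list of rows, one per output."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def relu(v):
    return [max(0.0, x) for x in v]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def make_layer(n_out, n_in, rng):
    """Random (untrained) layer; a real decoder would load learned weights."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def decode_reflectance(latent, wi, wo, layers):
    """Hypothetical decoder: latent texel features + light/view directions
    in, RGB reflectance in [0, 1] out."""
    x = list(latent) + list(wi) + list(wo)
    for weights, bias in layers[:-1]:
        x = relu(dense(weights, bias, x))
    weights, bias = layers[-1]
    return [sigmoid(v) for v in dense(weights, bias, x)]


rng = random.Random(0)
LATENT_DIM = 8  # assumed size of the per-texel latent vector
layers = [
    make_layer(16, LATENT_DIM + 6, rng),  # latent + two 3D directions
    make_layer(16, 16, rng),
    make_layer(3, 16, rng),               # RGB output
]

latent = [rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
wi = (0.0, 0.0, 1.0)  # direction toward the light, in the shading frame
wo = (0.6, 0.0, 0.8)  # direction toward the camera
rgb = decode_reflectance(latent, wi, wo, layers)
print(rgb)
```

The point of the toy is just that this runs per shading sample inside the renderer, in place of a hand-written material shader, which is why it isn't a post-process effect the way DLSS 5 is.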

curl-6 said:

The thing is though, sometimes democracies vote in authoritarianism; it's not hard for populist demagogues to persuade enough of the population to hand them power, as we've seen many times throughout history. If anything, the last decade in particular has shown us that governments, big tech, and the elites don't give a shit about the wellbeing of the masses and will use every means they can, including AI, to monopolize power and wealth at the common people's expense. |

A big part of populism in the coming years will be a push to regulate AI and make sure the systems aren't mainly in the hands of billionaires and AI companies. In general though we can probably just leave this here for now since I feel we've kinda said everything we have to say on the subject for now. I'm optimistic about it long term but we can agree on the part that there's likely gonna be a rough stretch. More than anything my original point here was showing that there's more than just a few positive applications of AI since the technology is already powerful enough to be very broad in its applications.

I still don't want more AI because of the water wasted to cool the data centres. Not to mention the chemical added to said water can't be removed, and because of the emissions from the data centres said chemical is going into the atmosphere and rain, hurting animals and humans alike.

How do you avoid those problems?

sc94597 said:

"Need" is a really odd word to use for game development. Technically video games aren't a "need" at all. They're luxury goods. Developers don't "need" to improve visuals or performance at all. Games could have probably stayed at the 7th Generation level in terms of genre diversity and gameplay mechanisms. Having said that, ray tracing definitely can benefit "cartoony" games. Many CGI animations look beautiful precisely because they use ray tracing, as an example. Accelerating it with an algorithm (that's all "AI", in this context, is) isn't that big of a deal really. My point though is that DLSS 5 is not the full extent of neural rendering. Neural rendering is much more than that, and Nintendo is almost certainly going to use it in its development. DLSS 5 might arguably "draw over" assets, but Nvidia's (and AMD's, UE5's, Intel's, etc.) suites of features don't all do that. Some help accelerate rendering so that more high-quality assets can run on hardware that couldn't otherwise handle them. If one's opposition to "AI" is the quality of the output, then that is almost certainly going to improve over time, and probably will be in a good place by SW3's release a decade-ish out from now. |

Nvidia did mention I think that DLSS5 can be applied to games like Minecraft even and have notable visual uplift, so yeah it would certainly help Nintendo even with "cartoony games".

Even if they only use it in lieu of using real time path tracing, the performance benefit from that alone could be massive. Giving developers a third option between spending tons of time/effort with baked lighting or having to go down the ray traced lighting/path tracing/Lumen avenue that takes up a ton of hardware resources will be a game changer for what the Switch 3 can do because you're taking probably the most GPU intensive task (real time lighting) and moving it off to an AI algorithm using the Tensor cores instead at likely a much lower compute cost. You just need to make sure you have enough CUDA cores to make DLSS5 work.

By the time the Switch 3 chip is even chosen you're probably talking about DLSS7 or 8 or something too, something that will be well beyond DLSS5.


CaptainExplosion said:

I still don't want more AI because of the water wasted to cool the data centres. Not to mention the chemical added to said water can't be removed, and because of the emissions from the data centres said chemical is going into the atmosphere and rain, hurting animals and humans alike. |

Some of these things are real concerns. They're not inherently AI driven though.

You don't need water cooling for AI. You can run an AI on a modest gaming computer.

But corporations are trying to run mega models that are 100x bigger and require ridiculously specialized hardware.

DLSS 5 isn't really harmful in any of the ways you're talking about. It's harmful in other ways.

the-pi-guy said:

Some of these things are real concerns. They're not inherently AI driven though. You don't need water cooling for AI. You can run an AI on a modest gaming computer. But corporations are trying to run mega models that are 100x bigger and require ridiculously specialized hardware. DLSS 5 isn't really harmful in any of the ways you're talking about. It's harmful in other ways. |

Well so far DLSS 5 hasn't killed anybody at least. We've already had Iranian civilians killed because some brainless AI program marked them as military targets.

Making those mega models will be a mistake, one way or another. Say goodbye to being able to afford a house.