freedquaker said:

Here is the thing: I agree with pretty much everything you said, so my arguments will be about trying to shed light on where exactly I am coming from, or what perspective I am looking at it from... I have narrowed it down to the highlighted points above...

a) I know I was comparing the system RAM to all of the RAM on the consoles, but hey I did what you suggested also several times, it doesn't change anything, other than altering the numbers slightly. I was saving myself some time from this extra chore. The important thing there is that, whether it be with or without the video RAM, the relative amount of RAM on consoles had never been this abundant. So in terms of memory, in comparison to the PC, this generation is incredible. Again this is not actually a comparison to the PC, but a comparison to the earlier generation playstations compared to the PCs of their generation (so I take the PC as a yardstick for relative performance).

|

Altering the numbers slightly?

If you have a PC with 4GB of system RAM and a 1GB video card, you are omitting memory equal to an extra 25% of the system RAM. That's not insignificant; it's just a less severe version of claiming the PlayStation 3 only has 256MB of RAM.
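To put rough numbers on that, here is a quick sketch of the arithmetic. It assumes the PS3's usual 256MB XDR + 256MB GDDR3 split, and the helper name `omitted_share` is just something made up for illustration:

```python
def omitted_share(counted_mb, omitted_mb):
    """Return how much memory is left out, two ways:
    relative to the counted pool, and relative to the real total."""
    total_mb = counted_mb + omitted_mb
    return omitted_mb / counted_mb, omitted_mb / total_mb


# PC example from this post: 4GB system RAM counted, 1GB video RAM ignored.
pc_vs_counted, pc_vs_total = omitted_share(4096, 1024)

# PS3 (assumed split): 256MB counted, the other 256MB ignored.
ps3_vs_counted, ps3_vs_total = omitted_share(256, 256)

print(f"PC:  {pc_vs_counted:.0%} of counted RAM, {pc_vs_total:.0%} of total")
print(f"PS3: {ps3_vs_counted:.0%} of counted RAM, {ps3_vs_total:.0%} of total")
```

Either way you slice it, leaving out the video RAM drops a sizeable chunk of the PC's memory from the comparison.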

Saving yourself time made your post factually inaccurate and skewed the results.

Besides, I do agree that RAM-wise the consoles have never been this close to the PC, but the rest of the system has also never been so sub-par and behind. There is a *massive* gap.

RAM won't make up for performance deficits; it doesn't do any form of processing. That's something that seems to escape many people in these forums, who grab a number and run with it in order to win an argument.

freedquaker said:

b) When I was saying that the games were not made with this in mind, I was referring to the new technologies such as HUMA in the next consoles, rather than GDDR5 RAM. But it's also likely that designing a game for slow RAM and then extrapolating to fast RAM will not yield the same results as designing it for the fast RAM from scratch, which will affect the game design etc. Basically all console-specific (idiosyncratic) features will improve the performance.

|

HUMA isn't a solution to sub-par performance caused by a lack of hardware resources.

HUMA is also merely a stepping stone towards AMD's ultimate goals with its Fusion initiative.

freedquaker said:

c) I am not saying MS should have designed a PS clone at all. They tried to scale up the X360 design, and while the RAM increased eightfold, the ESRAM increased only 3.2x, which came at the expense of compute units etc, crippling the machine. They were obviously very near-sighted and couldn't see the performance they'd get. If they had taken a non-unified architecture, with 4 GB DDR3 + 4 GB GDDR5 or something like that (which is nothing like PS4 but rather like PS3), they wouldn't have had to sacrifice any performance at all. The main problem today is not actually the 40-50% raw performance deficit (to some extent yes, but it's not the major bottleneck); it's the slow RAM and too-small ESRAM that are dragging the system down.

|

Bundling two different memory types would have driven up costs.

Consoles are cost-sensitive devices; they only have a limited budget to work with.

Sony's gamble simply paid off. They would have ended up with only half the amount of RAM if higher-density memory modules hadn't been released in time, and that was a gamble Microsoft was obviously not willing to make.

freedquaker said:

The impact of the CPU, with the exception of heavy AI and physics, is small, especially with close-to-the-metal programming, extremely low levels of CPU calls, and a parallelizable architecture. I am sure you are aware that AMD's Kaveri (very similar to PS4 but with half the cores) outperforms pretty much all high-end Intel CPUs in gaming although they are much beefier CPUs and AMD cores don't take advantage of any specific close-to-the-metal API like Mantle, which appears to increase performance up to 54% in CPU-bound scenarios (which is what's relevant here). That is of course, unless you have been living under a rock for the last few years, and unless you think you know way better than Sony or Microsoft about their own machines, and what kind of CPU they'd need.

|

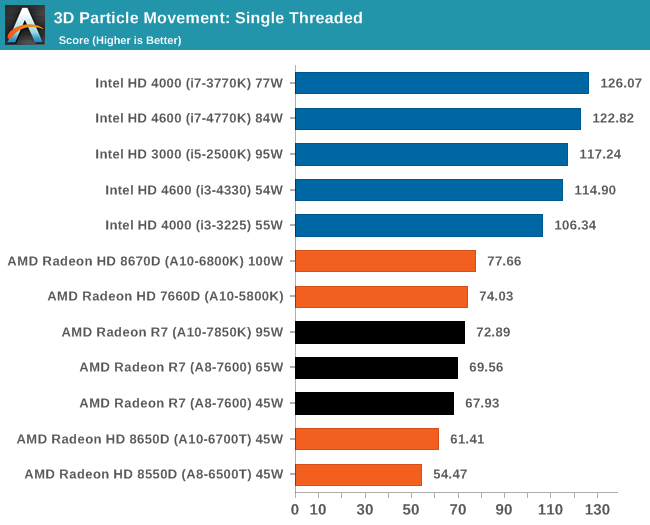

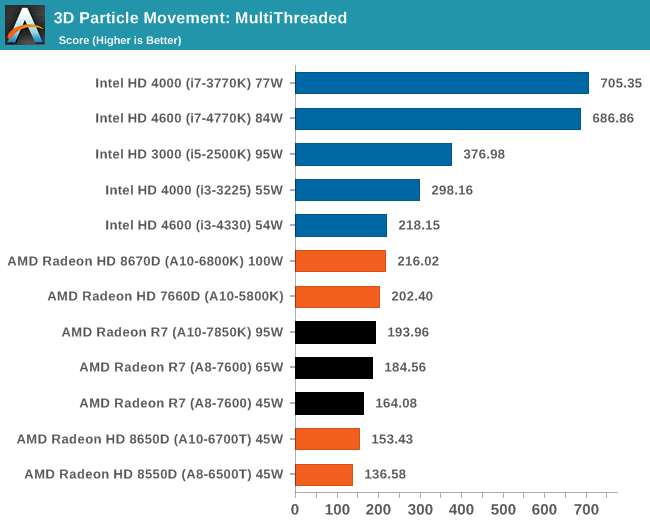

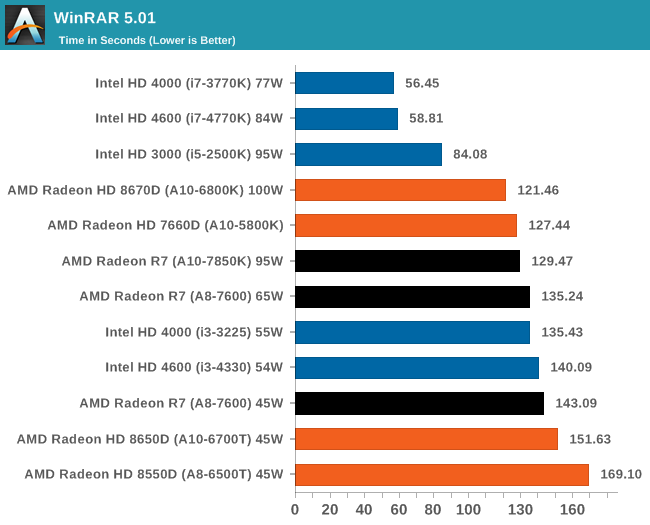

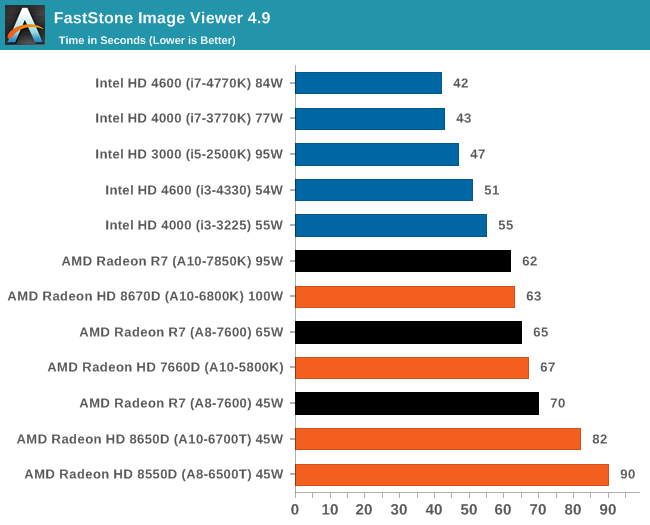

Kaveri doesn't outperform all of Intel's high-end CPUs.

The high-end being Socket 2011.

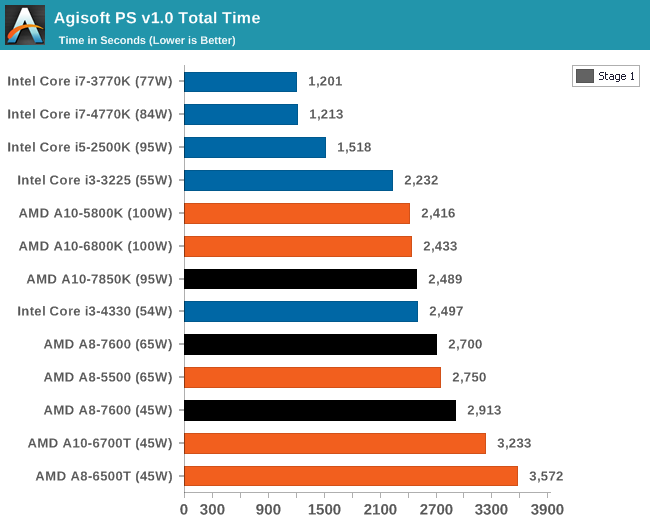

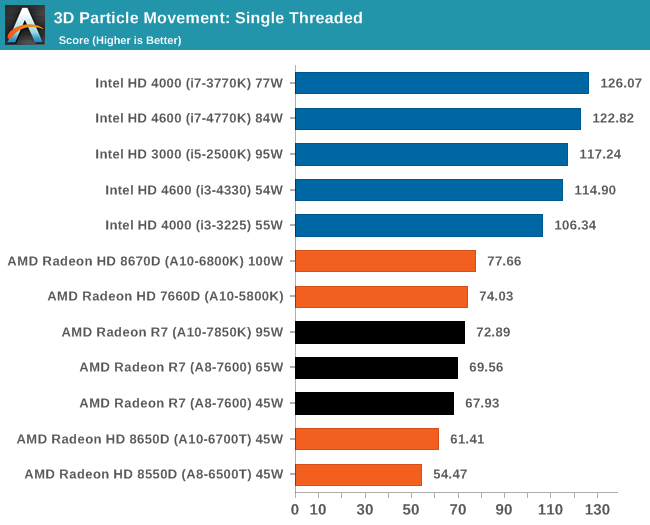

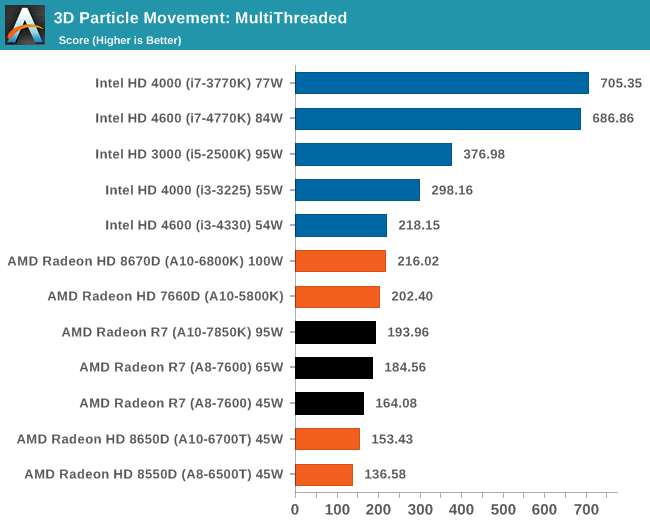

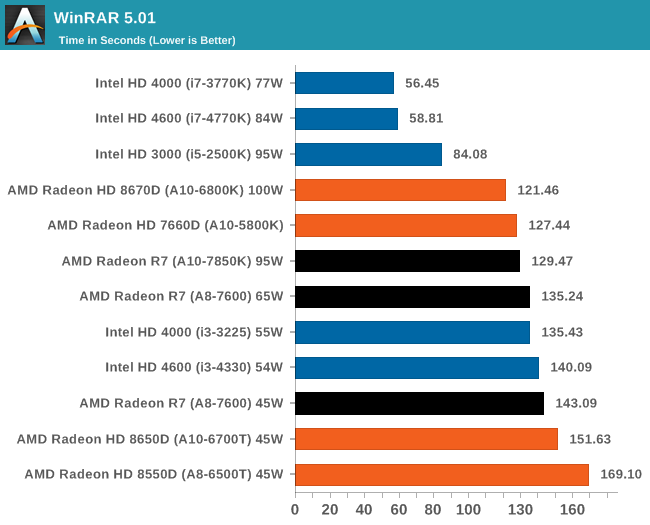

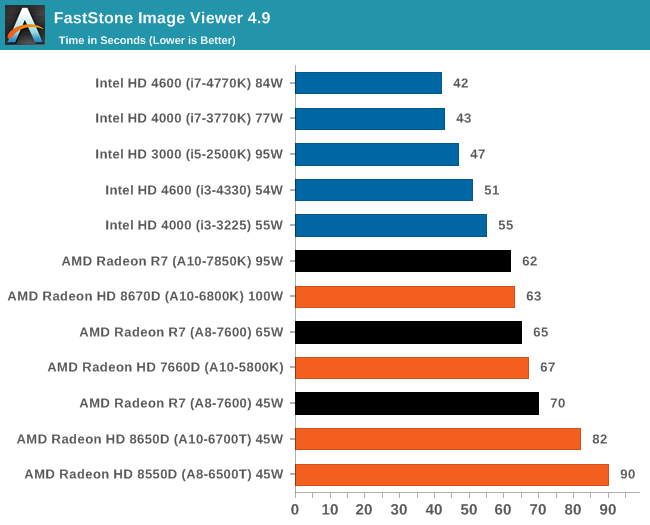

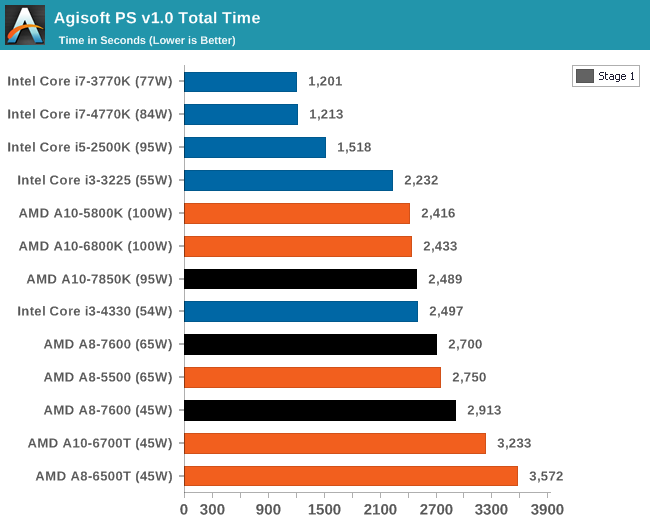

The black bars in the charts are Kaveri. Note how potent the mid-range, 3-year-old Core i5 2500K is.

Now the takeaway from this is that whenever something uses plain-Jane sequential CPU cycles, Intel still has a massive lead. (It should also be noted that this only includes the mid-range quad-cores, rather than the high-end hex-cores.)

AMD can beat Intel when something can leverage the GPU to assist in processing. By extension, an Intel quad-core paired with a GCN GPU would still put Kaveri to shame.

Fact of the matter is, for decades none of the console manufacturers have taken CPU performance seriously, and why would they? The average Joe only cares about graphics. It's a massive selling point and the consoles are built to match such expectations.

The PC offers a no-compromise gaming solution in terms of fidelity if you're willing to pay for it; consoles can't match 1440p, 1600p, 4K or Eyefinity.

I'm afraid these days 720p and 1080p are low-end resolutions that I expect out of a mobile phone, not a powerful gaming device.

freedquaker said:

And hey, do you also remember that the original Xbox used a Celeron CPU (customized cheap Pentium 3 variant), which was ridiculed by the industry while Xbox still managed to produce much better graphics than not only both PS2 and Gamecube but also most PCs with high-end CPUs at the time!

As a reference, when Xbox was released with a ridiculous 733 MHz Celeron processor, new generation Pentium IIIs at 1.4 GHz as well as 2 GHz Pentium IVs (Willamette) were already released (practically triple the performance of Xbox CPU). In those days CPU performance increased rapidly, and a 733 MHz Celeron was barely enough to play a DVD! So that extra CPU muscle was vital. Even then it was sufficient for Xbox to produce great games with that kind of CPU!

|

The original Xbox didn't use a Celeron.

It used a Pentium 3 derived processor, but with half the L2 cache.

If it had been a Celeron, it would have had half the cache associativity, which would have knocked CPU performance down by a good 10% or so.

As for the clock speeds, a Pentium 3 Tualatin running at 1.4GHz is faster than the Pentium 4 Willamette, provided the workload wasn't something bandwidth-intensive that gave the Pentium 4's quad-pumped bus an edge.

When the original Xbox launched, I had a Pentium 3 667 at the start of the generation and I was playing most of the Xbox multi-plats, including Halo, without breaking much of a sweat. Eventually I did upgrade to an Athlon Thunderbird, however, in preparation for upcoming games at the time.

Before that I also had a Cyrix PR300+ which was, to put it simply, one of the worst CPUs of all time, slower than a Pentium 2 300. Guess what? In theory it could handle not only DVDs but also Blu-rays. (Provided you copied the Blu-ray to a hard drive first, as USB 1.0 probably isn't fast enough to stream.)

The reason is that, like the original Xbox, PCs these days have dedicated hardware blocks in the GPU to assist with movie playback.

For instance, my single-core 1GHz Intel Atom tablet doesn't have a decoder in the GPU block, so I installed a Broadcom Crystal HD chip in the Mini PCI-E slot to do the decoding.

1080p movies went from unplayable to perfectly playable, without touching CPU usage.

Older computers lacked such functionality, with a couple of exceptions like GPUs from Matrox, the ATI All-in-Wonder cards, and a few S3 and 3dfx cards that targeted those niches back then.

Back then GPUs looked like this, and you can see the dedicated decoder and TV-handling part of the card:

@TheVoxelman on twitter
