Conina said:

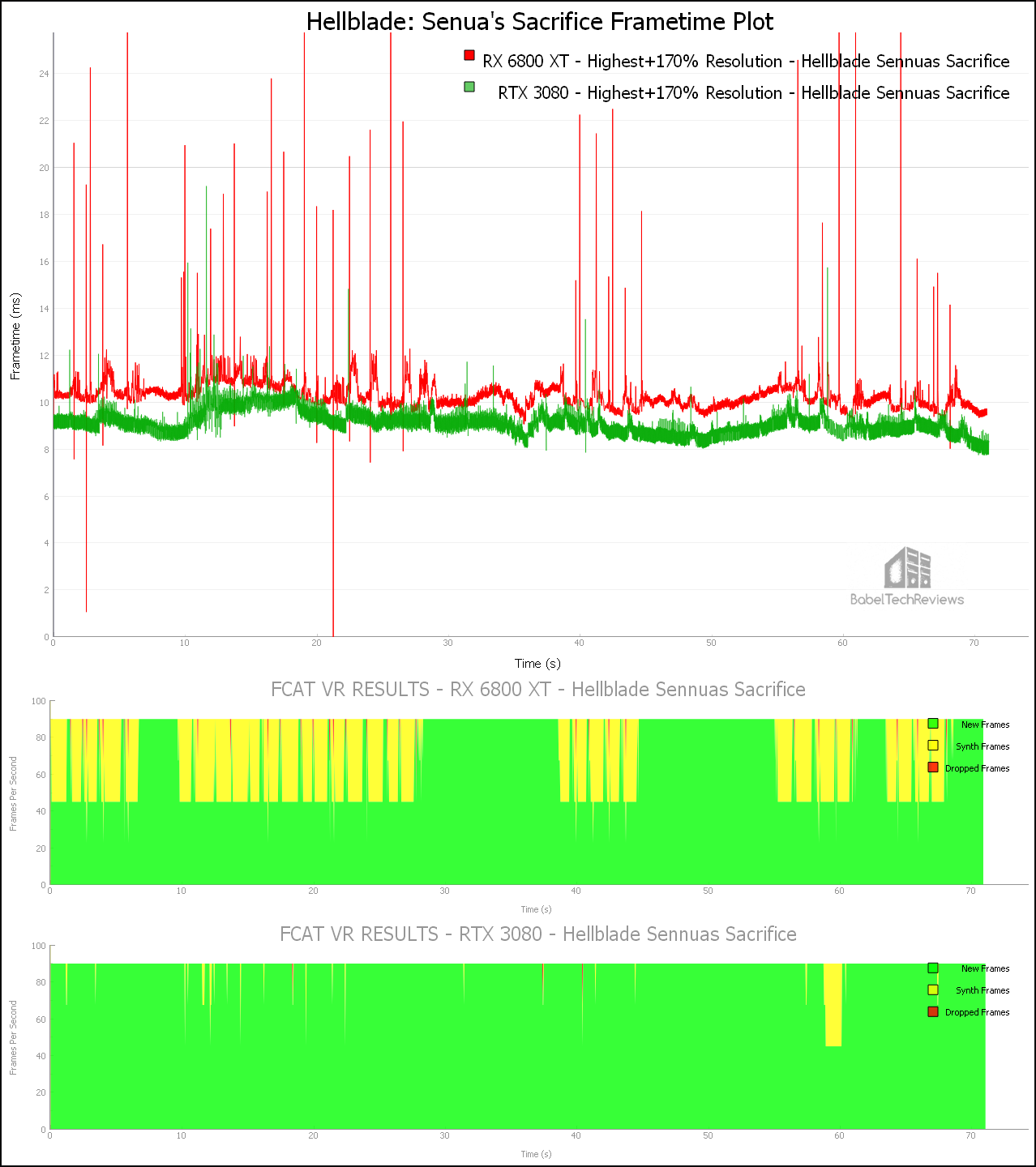

Frame reprojection is used to keep framerates steady at 90 FPS (or 80 FPS or 120 FPS, depending on the VR headset). If a GPU can output a frame every 11 milliseconds (roughly 1000 ms / 90), 100% of frames are "new frames" (green). If some scenes are more complex and the game needs 11-22 milliseconds, a whole frame is dropped and the user sees the same image for 22 milliseconds, without it adapting to his changed head position. If the GPU needs 22-33 milliseconds, two whole frames are dropped and the user sees the same image for 33 milliseconds, again without it adapting to his changed head position. There are similar frame reprojection methods for PSVR, SteamVR, OculusVR and some other VR platforms, e.g. ASW (Asynchronous Spacewarp) for the Oculus devices. They deliver a smoother VR experience: if the GPU needs 11-22 milliseconds, the missing frame is replaced by the previous frame BUT adapted to the head movement. The reprojection itself also needs a few milliseconds, though, and if there isn't enough time, the frame is dropped instead of reprojected. Dropped frames are more noticeable than synthetic frames.
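The frame-timing arithmetic in the quote can be sketched in a few lines. This is a simplified model, not how any particular VR runtime is implemented: it assumes a frame that misses a refresh deadline just waits for the next refresh, and `reprojection_fits` is a hypothetical flag standing in for whether the runtime had enough time left to run its reprojection pass.

```python
import math


def frame_budget_ms(refresh_hz: float) -> float:
    """Time between two display refreshes in ms (about 11.1 ms at 90 Hz)."""
    return 1000.0 / refresh_hz


def refreshes_per_frame(render_ms: float, refresh_hz: float) -> int:
    """How many refreshes one rendered frame spans, assuming a frame that
    misses a refresh deadline waits for the next one (simplified vsync model)."""
    return max(1, math.ceil(render_ms / frame_budget_ms(refresh_hz)))


def refresh_sequence(render_ms: float, refresh_hz: float,
                     reprojection_fits: bool) -> list[str]:
    """Label each refresh covered by one rendered frame.

    'new'       - the freshly rendered frame
    'synthetic' - the previous frame reprojected to the latest head pose
    'dropped'   - the previous image repeated unchanged (no time to reproject)
    reprojection_fits is a hypothetical flag, not a real runtime setting.
    """
    extra = refreshes_per_frame(render_ms, refresh_hz) - 1
    filler = "synthetic" if reprojection_fits else "dropped"
    return ["new"] + [filler] * extra


# Without reprojection: how long the same image stays on screen at 90 Hz.
for render_ms in (10.0, 15.0, 25.0):
    n = refreshes_per_frame(render_ms, 90)
    held_ms = n * frame_budget_ms(90)
    print(f"{render_ms:4.1f} ms -> same image for {held_ms:.1f} ms, "
          f"{n - 1} dropped frame(s)")
# 10.0 ms -> same image for 11.1 ms, 0 dropped frame(s)
# 15.0 ms -> same image for 22.2 ms, 1 dropped frame(s)
# 25.0 ms -> same image for 33.3 ms, 2 dropped frame(s)
```

With reprojection, `refresh_sequence(15.0, 90, True)` gives `["new", "synthetic"]`: the refresh that would otherwise repeat the old image shows a reprojected one instead, matching the 11-22 ms case described above.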

So the Radeon doesn't produce such synth frames yet, but that's not a hardware problem per se, rather something that could be fixed with a driver update, if I understand correctly?
