It's like this whole site is about the Wii U hardware now... Jesus... (At least in terms of the most popular posts, anyway.)

PC Specs: CPU: 7800X3D || GPU: Strix 4090 || RAM: 32GB DDR5 6000 || Main SSD: WD 2TB SN850

| Jizz_Beard_thePirate said: It's like this whole site is about the Wii U hardware now... Jesus... (At least in terms of the most popular posts, anyway.) |

If megafenix were gone, this place would be a better site by now ...

binary solo said:

So actually 70GB/s then. |

Actually no, that's way too low for what Renesas and Shin'en have said, and it isn't enough for achieving 720p with just 7MB of eDRAM when the Xbox 360 has to use 10MB.

here

http://developer.amd.com/resources/documentation-articles/articles-whitepapers/opencl-optimization-case-study-fast-fourier-transform-part-ii/

"

OpenCL™ Optimization Case Study Fast Fourier Transform – Part II

Local memory or Local Data Share (LDS) is a high-bandwidth memory used for data-sharing among work-items within a work-group. ATI Radeon™ HD 5000 series GPUs have 32 KB of local memory on each compute unit. Figure 1 shows the OpenCL™ memory hierarchy for GPUs [1].

Figure 1: Memory hierarchy of AMD GPUs

Local memory offers a bandwidth of more than 2 TB/s which is approximately 14x higher than the global memory [2]. Another advantage of LDS is that local memory does not require coalescing; once the data is loaded into local memory, it can be accessed in any pattern without performance degradation. However, LDS only allows sharing data within a work-group and not across the borders (among different work-groups). Furthermore, in order to fully utilize the immense potential of LDS we have to have a flexible control over the data access pattern to avoid bank conflicts. In our case, we used LDS to reduce accesses to global memory by storing the output of 8-point FFT in local memory and then performing next three stages without returning to global memory. Hence, we now return to global memory after 6 stages instead of 3 in the previous case. In the next section we elaborate on the use of local memory and the required data access pattern.

"

The Wii U eDRAM is like a big cache, so if the GPUs from the HD 5000 series have 2TB/s of bandwidth for each SIMD core, why does 563GB/s sound like so much for the eDRAM?
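A rough sanity check on the numbers quoted above, as a minimal sketch. It uses only the article's figures (more than 2 TB/s for local memory, roughly 14x the global memory) and the 563 GB/s eDRAM figure being argued about in this thread. The excerpt does not say whether the 2 TB/s is per compute unit or for the whole chip, so the code takes no position on that; the variable names are made up for illustration.

```python
# Figures quoted in the AMD article excerpt and in this thread (all in GB/s).
LDS_BANDWIDTH_GBS = 2000.0   # "more than 2 TB/s" for local memory (lower bound)
LDS_TO_GLOBAL_RATIO = 14.0   # "approximately 14x higher than the global memory"
EDRAM_CLAIM_GBS = 563.0      # eDRAM figure under discussion in this thread

# Global memory bandwidth implied by the article's own ratio.
implied_global_gbs = LDS_BANDWIDTH_GBS / LDS_TO_GLOBAL_RATIO

# How the claimed eDRAM figure compares with both numbers.
edram_vs_global = EDRAM_CLAIM_GBS / implied_global_gbs
edram_vs_lds = EDRAM_CLAIM_GBS / LDS_BANDWIDTH_GBS

print(f"implied global memory bandwidth: {implied_global_gbs:.0f} GB/s")
print(f"eDRAM claim vs global memory:    {edram_vs_global:.1f}x")
print(f"eDRAM claim vs local memory:     {edram_vs_lds:.2f}x")
```

On those quoted figures alone, 563 GB/s would sit between the article's global memory and local memory bandwidth.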

fatslob-:O said:

If were megafenix were gone this place would be a better site by now ... |

If fatslob-:O were gone, this would be a nicer place, free of lies, trolling, etc.

Well, maybe trolling would still exist, but to a much lesser degree.

There is no point talking to him because he only hears himself, and when you show him proof he just plays the fool and doesn't even check it. He is boring, not entertaining.

I already provided proof, but he hasn't answered my questions.

megafenix said:

Remember that the eDRAM on Wii U works as a big cache, not as a big RAM. Do you even know how much bandwidth the internal parts of even a low end GPU can handle?

here

And that's only for one local data share; each SIMD core has a local data share, so how much bandwidth would you get for a GPU of merely 4 to 5 SIMD cores? And we still haven't accounted for the texture units' L1 cache bandwidth or the global data share bandwidth. |

So the EDram is used as a cache instead, ok so what?

You still haven't explained how this cache could magically add extra performance to an already piss weak GPU, all you've done is remove the bandwidth bottleneck of the system, but now the GPU is the bottleneck.

The GPU could never use the potential of the EDram bandwidth anyways since it's a piss weak GPU, but to say that it can would mean that the WiiU GPU would now be more capable than the Titan Black. ...and this just isn't reality.

megafenix said:

Well, maybe trolling would still exist, but to a much lesser degree.

There is no point talking to him because he only hears himself, and when you show him proof he just plays the fool and doesn't even check it. He is boring, not entertaining. I already provided proof, but he hasn't answered my questions. |

You didn't ask ANY questions. I'll be asking the questions, since your BS doesn't make any sense. (Hence why Beyond3D banned you for spouting your neverland lies. LOLOL)

fatslob-:O said:

If megafenix were gone, this place would be a better site by now ... |

Him leaving would do nothing to help this site either. It's much better for him to at least 'learn' and go along with reality, because once he understands the error of his ways, he would serve as an excellent antidote for anyone spouting such nonsense in the face of reality, thus being truly useful to the site... just a thought.

jake_the_fake1 said:

So the EDram is used as a cache instead, ok so what? You still haven't explained how this cache could magically add extra performance to an already piss weak GPU, all you've done is remove the bandwidth bottleneck of the system, but now the GPU is the bottleneck. The GPU could never use the potential of the EDram bandwidth anyways since it's a piss weak GPU, but to say that it can would mean that the WiiU GPU would now be more capable than the Titan Black. ...and this just isn't reality. |

As I said before, the answer is tessellation + displacement.

Bandwidth is not going to give you more power, but it gives you the storage and the speed for achieving render techniques that require lots of bandwidth and rapid access.

Tessellation + displacement can achieve about 400x more polygons by only trading off about 33fps or 30% performance, and the trade-off is still pretty good. Of course, since the Wii U is not a high end GPU, trying to do this at 1080p would prove a challenge, not to mention that it starves about half the memory bandwidth.

Here, and it is based on an RV770 according to AMD:

http://developer.amd.com/wordpress/media/2012/10/WileyAuthoringforTessellation.pdf

"

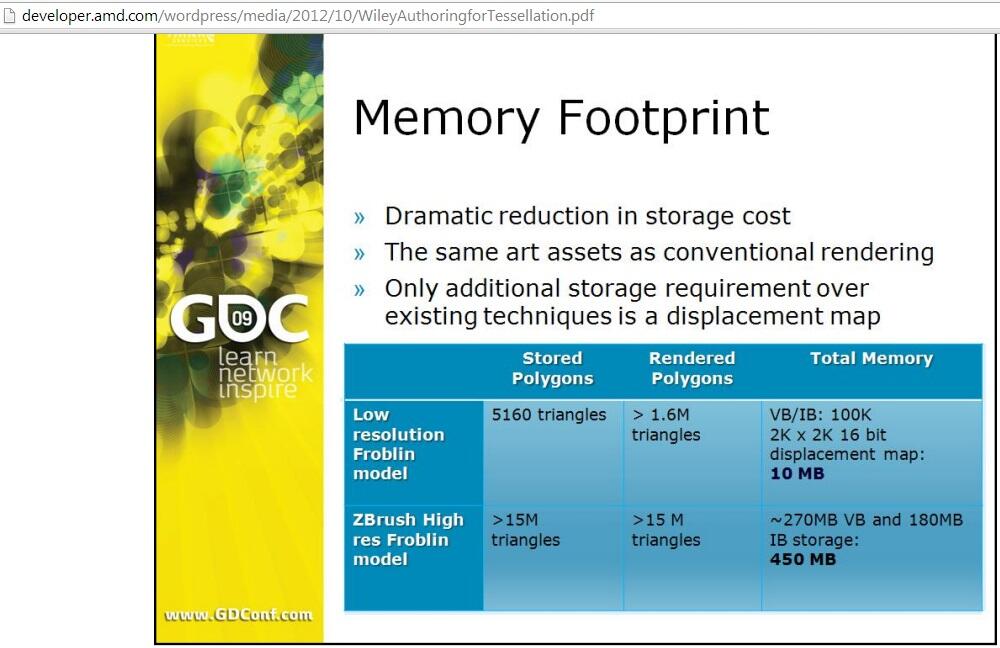

The first and most obvious benefit of real-time tessellation and displacement mapping is the dramatic increase in visual quality. Tessellation in conjunction with displacement mapping eliminates one of the last hurdles towards achieving cinematic quality visuals in games. Film has been using this technique for years in order to provide animators with manageable meshes to work with during the animation portion of development while still providing the highest quality results at render time. This technique eliminates polygonal artifacts and provides highly detailed, smooth internal and external silhouettes.

Another slightly less obvious benefit of this technique is the effect that it has on your memory footprint. Essentially, you can think of real-time tessellation and displacement mapping as an effective form of geometry compression. This technique utilizes the same art assets as conventional rendering with the only additional storage requirement being the 16 bit displacement map. If we take the Froblin character as an example we see that the memory footprint for the low resolution mesh and 2k x 2k 16 bit displacement map requiring about 10 megabytes of video memory. This is compared to the 450 megabytes of video memory that would be required to render the high resolution Froblin model that weighs in at around 15 million triangles. So, for just a modest increase in memory footprint, we are able to dramatically increase the total polycount and visual detail of the render mesh when using this technique.

Another less obvious benefit of this technique is in animation quality. Transforming the low resolution mesh is faster than attempting to transform the high resolution equivalent. This means that we get better animation performance. Also, as we just saw in the previous slide, we are storing exponentially fewer vertices in video memory. This means that we are able to store more data per vertex. What this provides us, for example, is the ability to increase skinning quality by being able to store more influences per vertex. This would also allows us to store a much larger library of morph targets for better facial animation, etc

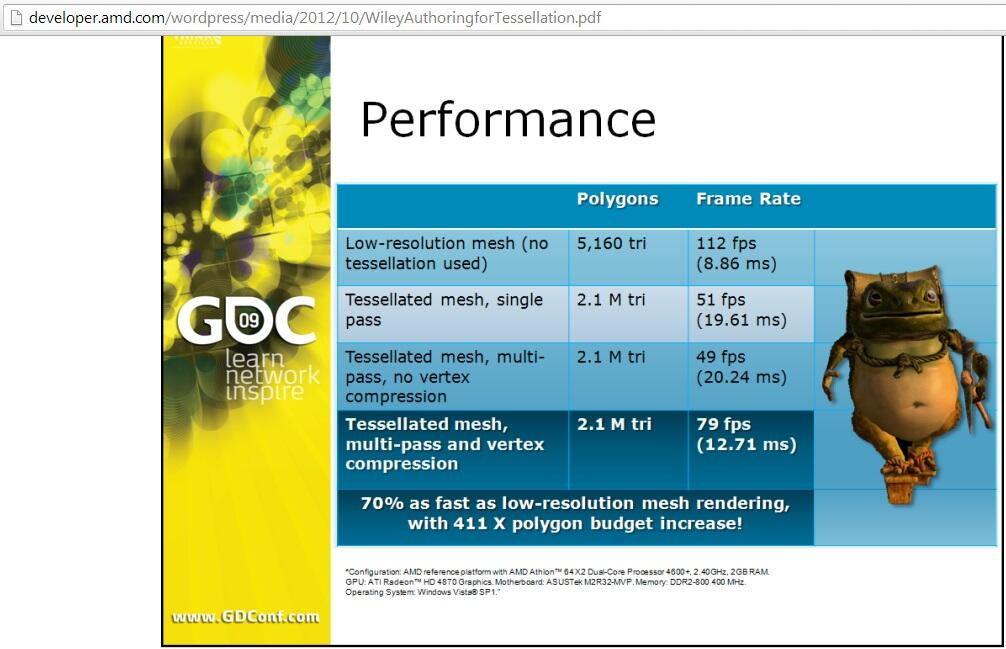

Performance is yet another benefit of real-time tessellation and displacement mapping. You can see here that when implemented with multipass rendering and vertex compression we are able to render over 400 times as many polygons while only taking a 33 frames per second or 30 percent performance hit when compared to rendering only the corresponding low polygon mesh. That is a pretty good trade off.

"
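To put the quoted slide figures in perspective, here is a minimal sketch of the arithmetic. It assumes "2k x 2k" means 2048 x 2048 texels at 2 bytes each with no mipmaps or compression, and that "33 frames per second or 30 percent" describes one and the same drop. The slides do not spell out either point, so treat both as assumptions.

```python
# Memory footprint figures from the AMD slides (Froblin example).
MAP_SIDE = 2048            # assumption: "2k" means 2048 texels per side
BYTES_PER_TEXEL = 2        # 16-bit displacement map
LOW_RES_TOTAL_MB = 10.0    # low-res mesh + displacement map, per the slides
HIGH_RES_TOTAL_MB = 450.0  # high-res ~15M-triangle mesh, per the slides

# Size of the displacement map alone, and the overall memory saving.
displacement_map_mib = MAP_SIDE * MAP_SIDE * BYTES_PER_TEXEL / (1024 * 1024)
memory_saving = HIGH_RES_TOTAL_MB / LOW_RES_TOTAL_MB

# Performance figures: a 33 fps drop that is also a 30% drop (assumption).
FPS_DROP = 33.0
RELATIVE_DROP = 0.30
baseline_fps = FPS_DROP / RELATIVE_DROP    # implied low-poly frame rate
tessellated_fps = baseline_fps - FPS_DROP  # implied rate with tessellation

print(f"displacement map:  {displacement_map_mib:.1f} MiB")
print(f"memory saving:     {memory_saving:.0f}x")
print(f"low-poly baseline: {baseline_fps:.0f} fps")
print(f"with tessellation: {tessellated_fps:.0f} fps")
```

On those assumptions the slides imply roughly 110 fps dropping to about 77 fps on AMD's test setup, with the mesh data shrinking by about 45x.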

jake_the_fake1 said:

|

Except me and Pemalite were trying to do that about 2 months ago and it got nowhere ... (Go and ask him about it.)

He just won't listen and instead will pull numbers out of his ass just to suit his pitiful arguments.

fatslob-:O said:

Except me and Pemalite were trying to do that about 2 months ago and it got nowhere ... (Go and ask him about it.) He just won't listen and instead will pull numbers out of his ass just to suit his pitiful arguments. |

Hehe, like yourself ignoring facts from Renesas or Shin'en or IGN or directly from Microsoft?

Yeah right, you are so pitiful. You are just here to troll, just admit it already.

The numbers and the formula aren't invented, they are out there, you just have to investigate, but we already know you won't do it. That's why your comments are irrelevant, because you ask for good answers and sources yet you don't provide them.

Provide a source instead of talking nonsense.