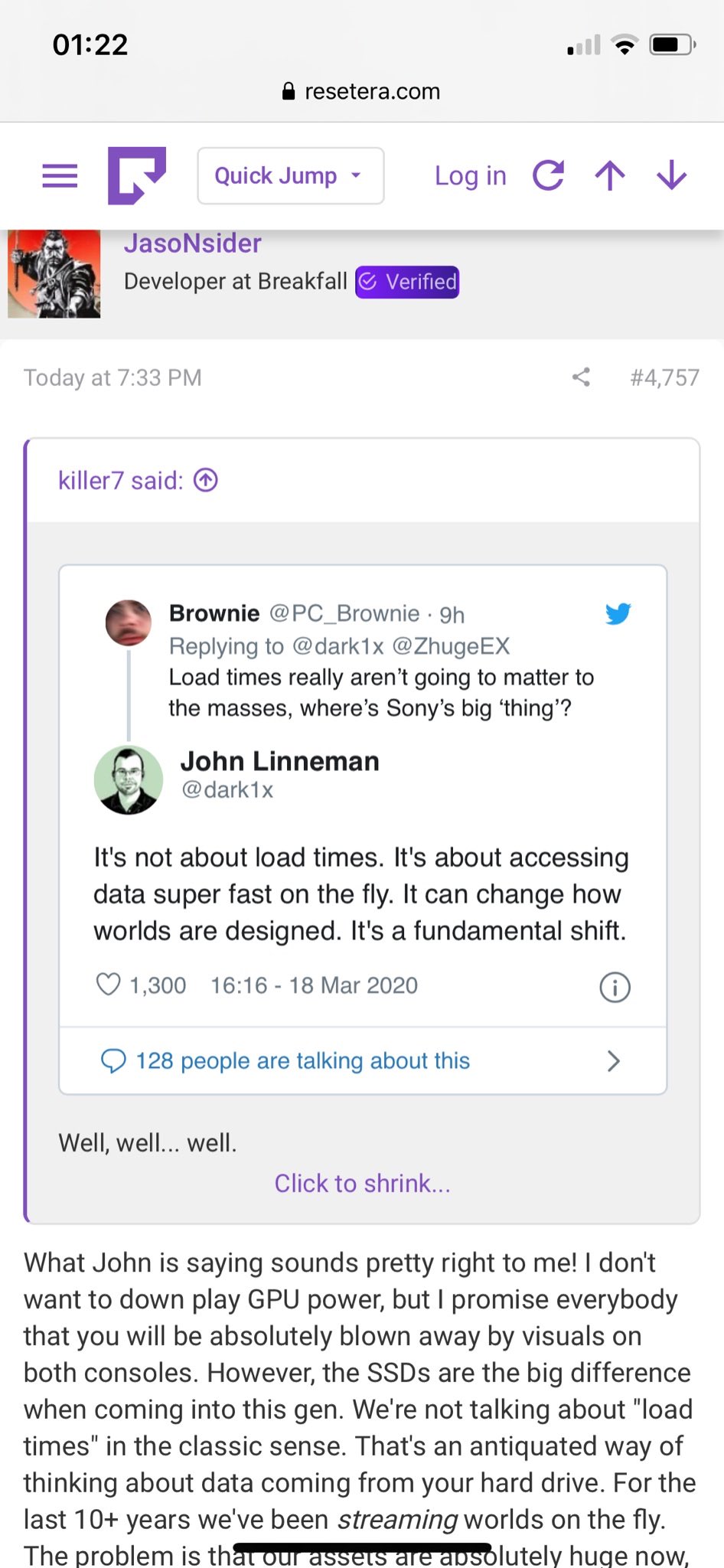

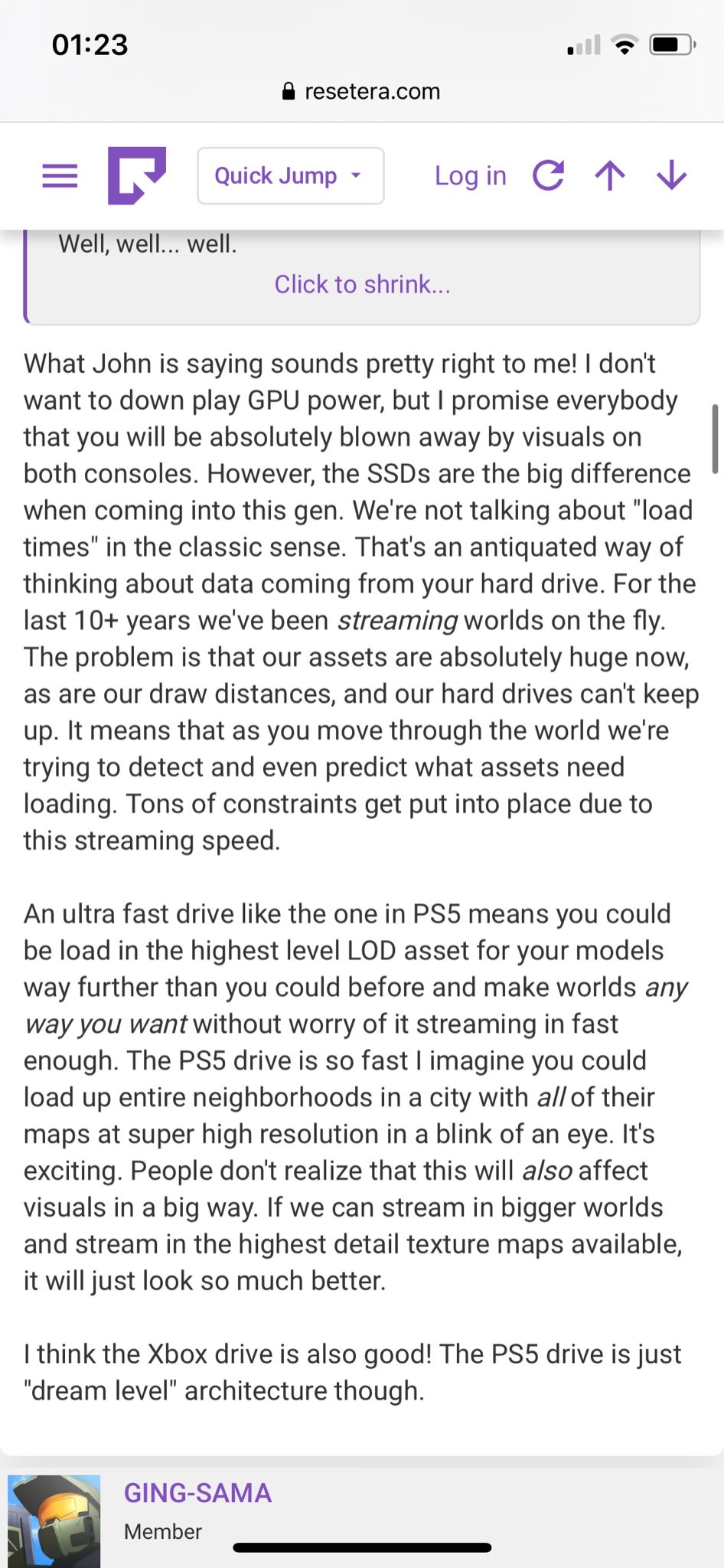

After reading a lot of comments from game developers and experts across the Internet and watching their video analyses, I can conclude that both systems are great. They apply AMD hardware differently and use different methods. I am not saying this to hype the PS5 alone or the Xbox Series X alone, because both of them are incredible. Developers will take different approaches to making their games in the future.

In this thread I will focus on the PS5, because many people are downplaying its specs and system design. I will post a lot of comments from game developers and experts on Twitter and forums.

Dollar bet: within a year from its launch gamers will fully appreciate that the PlayStation 5 is one of the most revolutionary, inspired home consoles ever designed, and will feel silly for having spent energy arguing about "teraflops" and other similarly misunderstood specs. 😘

— Andrea Pessino (@AndreaPessino) March 19, 2020

Most crucial part of the @cerny presentation imo. The SSD in the PS5 (and all the associated IO hardware) is going to fundamentally change how we design videogames by removing limitations we've been working around the last two gens. https://t.co/XDcj2BJ5gV

— Anthony Newman (@BadData_) March 18, 2020

Just saw the new @Playstation #PS5 presentation. Great job @cerny! Since I routinely have to explain to people why I'm excited for an SSD for rendering I thought I'd write a little thread to explain. Case in point: Uncharted 1 to Last of Us transition: pic.twitter.com/4Q75NEuigy

— Andrew Maximov (@_ArtIsAVerb) March 18, 2020

Read the rest at this thread https://gamrconnect.vgchartz.com/thread.php?id=242074&page=1

Video analysis:

https://www.theverge.com/2020/3/18/21185141/ps5-playstation-5-xbox-series-x-comparison-specs-features-release-date

EDIT:

OK guys, another example is Resident Evil 3 Remake. This game runs at a slightly higher resolution but worse frame rates on One X, while it runs at almost 60 fps on PS4 Pro at a lower resolution than the One X. Remember that on paper the One X has roughly a 43% advantage in FLOPS (plus the One X has a better memory setup and UHD 4K). This just shows that in reality both machines have some trade-offs: some aspects run better and some run worse, so each has its pluses and minuses. Comparing PS5 and Series X will be even harder, maybe impossible, because they are far closer to each other than PS4 and Xbox One were (41%), and since both can produce native 4K and run VRS, I believe both will run the same.

RE3 Demo has higher resolution on One X and higher framerate on PS4 Pro.

— Hunter 🎮 (@NextGenPlayer) March 20, 2020

Another classic example of why the power narrative is so tiring - most times games run amazingly well on both consoles, and often it's about tradeoffs. pic.twitter.com/EJXts8jWQQ

EDIT 2:

Another example is DOOM Eternal, which runs better on PS4 Pro than on Xbox One X even though the gap in raw TFLOPS is about 43% in favor of the One X, which also has more memory (RAM), more bandwidth, and a faster CPU. It is another example showing that even at that level of disadvantage, games can run and look equal.
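For anyone who wants to check the on-paper gaps themselves, here is a minimal Python sketch of the arithmetic. The 6.0, 4.2 and 10.28 TFLOPS figures are the ones quoted in this thread; the 12.15 TFLOPS figure for Series X is not quoted here and is my assumption based on the commonly cited spec. The helper name `percent_advantage` is just something I made up for illustration.

```python
def percent_advantage(higher_tflops: float, lower_tflops: float) -> float:
    """Return how much larger the first figure is than the second, in percent."""
    return (higher_tflops / lower_tflops - 1.0) * 100.0


# (higher, lower) TFLOPS pairs. The Series X value is an assumed figure,
# not one quoted in this thread.
pairs = {
    "Xbox One X vs PS4 Pro": (6.0, 4.2),
    "Xbox Series X vs PS5": (12.15, 10.28),
}

for name, (higher, lower) in pairs.items():
    print(f"{name}: {percent_advantage(higher, lower):.1f}% on paper")
```

This only compares the paper numbers. It says nothing about how any game actually runs, which is the whole point of the examples in this thread.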

According to @digitalfoundry Doom’s Eternal actually runs better on PS4 Pro than the Xbox One X. (6TF vs. 4.2TF & both GCN Architecture) 🧐

With that in mind, I think PS5 and its “10.28 Teraflops” Navi would be just fine.

Just saying 🤷🏼‍♂️

(Video Credit: @digitalfoundry) pic.twitter.com/meRDAJeELL

— Josh (@LiquidTitan) March 22, 2020

As popular and confusing as that tweet has been, a 280 character hot take is neither useful nor educational. Here's a proper summary of the complications of judging a videogame console by its teraflop: https://t.co/OKb11fpcYM #gamedev

— Matt Phillips (@bigevilboss) March 22, 2020

" ... A quick look back at PS1 vs Saturn vs N64, Xbox vs Gamecube vs PS2, Xbox 360 vs PS3, and Xbox One vs PS4 shows countless examples of cross-platform games that should have run faster on one particular system when looking at the specs alone, but the benchmarks proved otherwise..."

"...We’re now in an era where major consoles are sharing the same underlying architecture, and some main system components comprise the same chips from the same manufacturer — some even the same model numbers — differing slightly by clock speeds, implementation, firmwares, and APIs. If we’ve not seen a slew of reliable and predictable FPS differences between previous machines so far, we’re certainly not going to suddenly see them moving forward. The gap is closing, not widening..."

"...So, which computer would give the highest FPS in a game: one with a 2.0 teraflop processor in its GPU, or one with a 2.2 teraflop processor in its GPU? Now we need to look at different clock speeds, bus bandwidth, cache sizes, register counts, main and video RAM sizes, access latencies, core counts, northbridge implementation, firmwares, drivers, operating systems, graphics APIs, shader languages, compiler optimisations, overall engine architecture, data formats, and hundreds, maybe thousands of other factors. Each game team will utilise and tune the engine differently, constantly profiling and optimising based on conditions.

A 10% increase in any one of those individual stats isn’t going to give you a 10% increase in performance. The machine as a whole would need to be slightly increased to see an overall benefit, and you would only be able to determine the final gain by profiling each game, and even then it won’t scale linearly. If this was a consistent and predictable thing to calculate based on raw box stats, we wouldn’t need Digital Foundry..."
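To put a rough number on the point in that quote, here is a toy Python sketch using Amdahl's law. This is my own illustration, not something from the article: it assumes a frame's time can be split into a part that benefits from the 10% faster component and a part that does not, which is a big simplification of a real game. The function name and the fractions are hypothetical.

```python
def overall_speedup(fraction_affected: float, component_speedup: float) -> float:
    """Amdahl's law: whole-frame speedup when only part of the frame time gets faster."""
    return 1.0 / ((1.0 - fraction_affected) + fraction_affected / component_speedup)


# Hypothetical shares of frame time that depend on the upgraded component.
for fraction in (0.25, 0.5, 0.75, 1.0):
    gain = (overall_speedup(fraction, 1.10) - 1.0) * 100.0
    print(f"{fraction:.0%} of frame time affected -> {gain:.1f}% faster overall")
```

Under this toy model you only get the full 10% when the whole frame is limited by that one component. If it accounts for half the frame time, the overall gain is a bit under 5%, and real games will not follow even this simple curve.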

Last edited by HollyGamer - on 23 March 2020